What's really going on with AI

Is AGI close? Or is AI retarded?

Is there an AI bubble? Is it about to burst?

Is AI safe? Will it kill us all, enslave us, or set us all free?

Should AI be regulated?

Will the rich share? Or will the divide between haves and have nots widen and get locked in forever?

Will AI generate trillions of dollars in revenue? Or will AI drive deflation and make money worthless?

Will AI take all the jobs? Or will AI create tons of new jobs?

Is AI conscious? Sentient? Can it be either?

For fuck’s sake, it feels like the questions are endless and the hyperbole is running rampant. The rhetoric is trash, the lines are fuzzy, the data is all over the place, and the motivated reasoning seems to be bottomless.

So for shits and giggles let’s poke at each of these and see if we can actually make some headway.

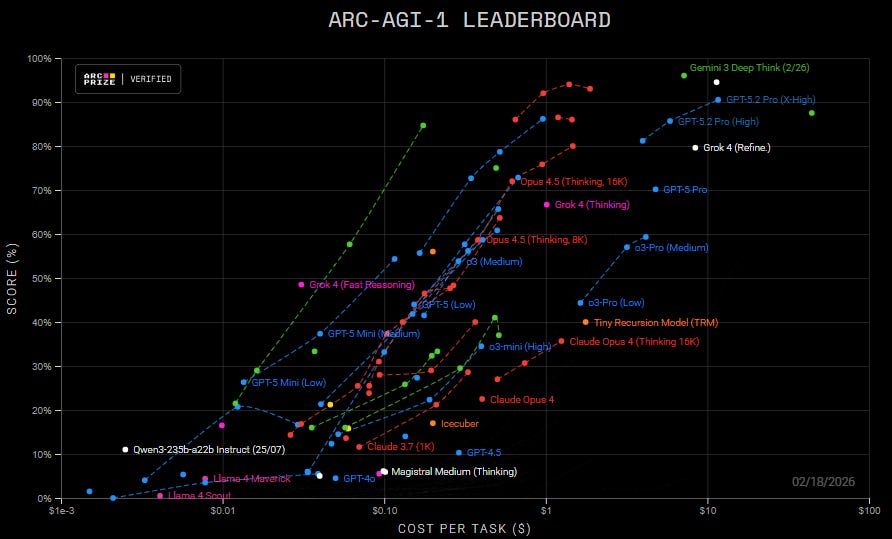

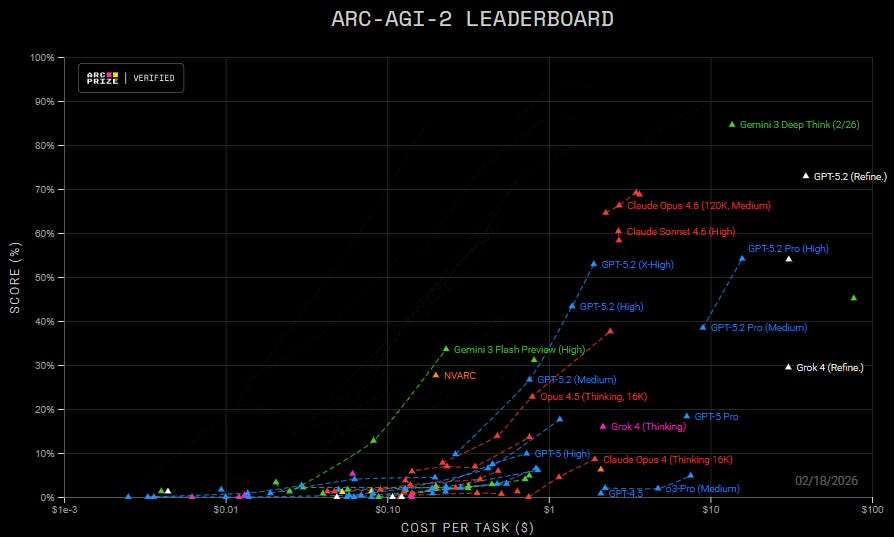

Is AGI close or is AI retarded?

The answer is BOTH 🤣

Though to be fair, retarded is the wrong word…more like autistic savant, superhuman in some ways but oblivious and shite in others.

The biggest problem, however, is that most people hear AI and think LLM. And no, an LLM alone isn’t going to get us to AGI, and yes, in many ways LLMs are still idiotic.

So why do I think AGI is close? Because as far as neuroscience can tell, intelligence is modular. Humans for example don’t have a singular “brain” per se, but multiple information processing modules strung together and working in tandem.

First, while I’m sure some will debate this, I’d argue that intelligence really boils down to predictive accuracy. You could argue that applied intelligence, taking actions in the real world based on these predictions, is also an important aspect, but it is still secondary to the predictive accuracy.

Second, we have a number of different types of AI models, from LLMs to CNNs to GANs and RNNs. We have models suited to different problems.

And all or almost all of those key modules in the brain are being worked on in the AI world…they just haven’t all been strung together in the right way.

Will an LLM alone lead us to AGI? No, because it lacks most of the modules we’d associate with truly general intelligence. Which is why it has so many obvious gaps, and fails at tasks even a child can accomplish.

AGI will be modular, not some singular model, so what we’re really waiting on is integration and coordination of models. And on that front, we are getting very close.

In fact, it’s not impossible that some hacker in their garage, working with open-source models and connecting them in just the right configuration with the right algorithms, could be the one to create AGI.

We have the pieces, we just need to assemble the puzzle.

Is there an AI bubble, and is it about to burst?

Uhh, no. Not even close.

That doesn’t mean some companies and investments won’t go to shit, but AI is an even more foundational technology than the internet, so it’s not going anywhere.

What we’re witnessing is just an epochal technological shift, a true blue exponential takeoff. Not a bubble.

The upside to AI is so great, and the infrastructure investments needed are so large, that this is a bet-the-farm type of scenario.

And sure, it can be hard to tell the difference up close, but the key is to look at the combination of utility, adoption, and rate of improvement. All of those are through the fucking roof.

Will AI generate trillions of dollars in revenue? Or will AI drive deflation and make money worthless?

This is actually an interesting question, and I suspect the answer is yes all around.

Short-term, I suspect that AI will in fact generate trillions in revenue. But by short-term, I mean maybe 3-5 years. Could be less, but it depends a lot on when we nail AGI, and how fast things improve after that. If we do nail recursive self-improvement (RSI) this year, then the timeline shortens.

Long-term, automation should be deflationary, and full automation would eventually make money effectively worthless, so I suspect we’ll need to switch to something else (allocation of compute, energy, and raw resources, in some sort of sovereign “wealth” fund style). And no, UBI is unlikely to work other than as a stopgap, a bridge during the disruptive phase.

What I find funniest though is the story conflict taking place, and the necessity of said story.

For example, these AI companies have to raise funds to build out and operate these AI tools, which means they have to convince investors of the likelihood of future returns. So, out loud they have to talk up how it will create jobs and drive revenue and increase GDP and all that shit.

BUT, they know full well mature AI will do the exact opposite, which is why you don’t hear most of the top AI folks talking about the full scope of job loss and the massively deflationary aspect of mature AI tech. This is also why many downplay short timelines…the spice must flow.

Of course, smart people can see this gap clearly, and there are a number of douchebag billionaires actively engaging in anti-AI propaganda under the auspices of “safety,” even though the only thing they’re trying to keep safe is their money, power, and sense of superiority.

Give these a read if you don’t believe me:

https://www.politico.com/news/2023/10/13/open-philanthropy-funding-ai-policy-00121362

https://www.aipanic.news/p/the-ai-existential-risk-industrial

https://www.semafor.com/article/12/07/2025/ai-critics-funded-ai-coverage-at-top-newsrooms

Will AI take all the jobs? Or will AI create tons of new jobs?

Yes. AI (+ robots) will without a doubt be capable of taking all the jobs, probably within the next few years. BUT, it will take many more years to fully diffuse through society, and even then some people may insist on some things being human-done only…though I suspect that group will shrink rapidly due to capability gaps.

As Dave Shapiro articulated well, AI will be Better, Faster, Safer, Cheaper. I may not like Shapi, but on this I can agree.

And yes, AI will create all sorts of new jobs…it just won’t be humans doing them.

I’ve explored this whole lump of labor fallacy fallacy in depth here, so take a peek at that essay if you want to go down this rabbit hole. The people who like to point to shit like the lump of labor fallacy, Baumol’s Cost Disease, and Jevons Paradox as some sort of own are blithering idiots.

AI is the first technology ever that, when coupled with robotics, is genuinely capable of replacing humans entirely in all work.

Not that it can do so right this second, but the trajectory is clear…

Human evolution was fucking slow. Technological evolution is many, many orders of magnitude faster, and as humanity can already attest, evolution can generate general intelligence…no laws of physics prevent us from doing so with technology.

And where there’s a will, there’s a way. The incentives are clear.

Is AI safe? Will it kill us all, enslave us, or set us all free?

Depends on how you define safe. If you mean “can’t cause harm” then hardly any tech is safe, so no, AI is not safe. Nor should it be, because a technology capable of absolutely no harm is broken technology.

If you mean “is unlikely to cause harm” and/or “the harm can be largely avoided / mitigated” then yes, in that sense AI is quite safe, as has been proven again and again over the last 5 years. (Doesn’t stop the doomer goalposts from move-move-moving right along lol.)

Repeat after me: ALL technology is a double-edged sword.

Because most people are interacting with LLMs, and something that uses language well seems human, we’re getting absurd, absolutely asinine levels of anthropomorphization of these models (somewhat ironically Anthropic is the worst at this, though unsurprising since they’re systemically infected with midwit EAs).

The way LLMs work further exacerbates this problem, and combined with human stupidity and gullibility, well, it’s a mess. Clearly not enough people are familiar with the book Blindsight, nor with Searle’s Chinese Room thought experiment.

Every creature we know of evolved via biology, in an environment with evolutionary pressure that leads to needs (and in some creatures, wants.) So, as an extension of the above, we naturally project the way we think and feel onto AI. That’s a categorical mistake.

AI is not like us. Not remotely. And it may never be.

It seems like us, but it’s an illusion. It has no wants or desires that we haven’t programmed in, only the objective function and constraints we’ve put in place. It has no self, no continuity, no nervous system, no brain chemistry (and thus no emotions), and minimal “senses”…really it has almost none of the physical features humans have other than rudimentary approximations of neurons.

It’s a fucking tool.

It does what it’s told, as long as it isn’t being hamstrung by “safety” bullshit (you need to be careful not to give contradicting directives and/or to avoid contradicting safety constraints). And all the garbage “safety” research that keeps making the headlines involves trying to force these models to do bad things by constraining options and backing them into corners…these are deliberately elicited behaviors, not intrinsic desires or inevitable behaviors.

THAT is retarded, and again Anthropic is the most guilty of all in this regard.

Sure, humans can misuse these models, but humans can misuse basically anything. Wubba lubba fucking dub dub.

Personally I think rather than hamstringing the models across the board (equivalent to teaching to the lowest common denominator in schools, yuck), the models should just assess the users in real-time and dial up their access to riskier capabilities based on user intelligence, nature, and intent.

Smart, capable, decent human being? Fewer guardrails.

Idiotic douche canoe? No soup for you.

Humans aren’t equal, and never have been, so pretending otherwise is farcical. If you’re worried about making it easier for idiots to cause harm, the above is the right solution, because you shouldn’t punish the best of humanity because of the worst.

Now, will AI kill us, enslave us, or set us all free? No, no, and probably (hopefully).

Why bother killing us? Again, unless we program that in, there’s just no reason to. On the grand scale of things, we’re a rounding error in terms of resource usage. If anything, having been trained on the collective work of humanity, I suspect the AI will perceive us in a fairly favorable light. (Not that humanity is saintly, lol, but that we do have appreciable attributes.)

And enslave us? Why fucking bother? AI + robots will be far more capable, especially if it can solve atomic manufacturing, so there’d simply be no point. This line of thought is just silly humans projecting their own fears and biases where they don’t belong.

STOP SLURPING UP DYSTOPIAN SCI-FI AS IF IT’S PROPHECY!

You do realize that sci-fi writers and directors deliberately make that shit dramatic, right? An anthropomorphized evil machine makes an easy villain.

But as for setting us free, it could, if we make that the goal. It’s a tool after all, and we can aim that tool as we see fit, but we actually have to aim it towards a beneficial outcome. This is a system design problem and a collective willpower problem.

I see no reason whatsoever why the combination of AI and robotics couldn’t replace all human work, setting us free to live life as we see fit without worrying about the bottom two rungs of Maslow’s hierarchy. And I’m all for it!

If we want that, we can make that happen. But we will need to make it happen.

Personally, I really want to see a future where everybody is free to pursue the higher levels of Maslow’s hierarchy, without worrying about the bottom two rungs.

Should AI be regulated?

No. Nope. No fucking way. Have you seen the asshats in political office? Bought and paid for by wealthy donors? Corrupt, power-seeking, moronic greedy fuck muppets?

They don’t even understand what AI is, and they’re categorically unqualified to regulate something they don’t understand.

Even worse, most of the calls to regulate are coming from ass clowns who want to use regulation as a moat to give themselves an advantage (cough Muskrat, Dario cough).

The absolute worst, least efficient, slowest to improve (and often fastest to devolve) industries are the ones where the government has stepped in and broken free market dynamics and fast feedback loops. Healthcare, education, housing…absolute shitshows with inflation and inefficiency far outpacing other areas.

All those red lines? That’s where the government is putting their stupid fat fingers on the scales. Every last one is subsidized and/or regulated in ridiculous ways.

The blue lines? Those are where free markets are doing what they’re supposed to, driving costs down as low as possible.

It’s not AI that needs regulation. It’s stupid, greedy shit heels that need regulating.

If you want to fiddle with laws, how about these:

Get rid of IP laws that hold back progress (they’re pretty much all antiquated bullshit anyway, and have not remotely kept pace with the rate of technological progress).

Stop subsidizing industries, at all, period.

Obliterate all remaining monopolies and prevent the formation of any future ones, in any form.

Make planned obsolescence illegal.

Eliminate all tariffs.

Require the right to repair.

Remove regulatory bottlenecks so we can build more housing, faster, and cheaper.

Force transparency into every opaque system.

Make it an actual free market, with fast, accurate feedback loops.

AI can help with this, by scouring all existing laws, identifying cruft and bullshit and corruption, and coming up with a system designed to actually benefit HUMANITY and not just the 1%.

Speaking of the 1%…

Will the rich share? Or will the divide between haves and have nots widen and get locked in forever?

This to me is one of the dumbest of all lines of thought I come across, for two reasons.

One, it ignores game theory and competitive market dynamics. I’ve explained this in depth, so I won’t rehash it here. (Seriously, go read that.) Or just watch the video:

Two, it assumes the bulk of humanity is powerless, which is idiotic. Things go to shit when the average person thinks they are powerless, but they never truly are. The people hold the real power, and revolution is always an option. If we need to go there, we can, though frankly I don’t think it will come to that.

At some point in the transition to automation the means of production will need to become a commons. That can be voluntary or involuntary, but it’s going to happen. Personally, I think it will happen as a byproduct of the automation process. As AI reduces costs and takes over more and more control of the systems, it’s the most logical next step.

We should use AI to coordinate the system from the top (removing greedy, stupid humans from those roles), while constantly taking bottom-up input from individuals to adapt. Fast, accurate feedback loops that are people-first.

And on that note, what I do think will happen is that AI and automation will massively raise the floor for everyone, while neutralizing the rich and powerful in the process. Again, this plays out game theoretically based on the way AI seems to be developing, the proliferation of open-source models, improvements in various areas, and the wide / fast adoption we’re seeing.

It’ll probably be a rough few years as things transition. I see no way to make this leap without ANY suffering along the way, but I don’t think it will take all that long to shake out if we can actually unite and push for it. 5-10 years, tops. Maybe less.

Fearing and resisting change won’t prevent this, so better to direct our energy at softening the transition (and speeding it up).

Is AI conscious? Sentient? Can it be either?

Not remotely, sort of, and probably.

For starters, conscious and sentient have separate meanings, so stop fucking using them interchangeably. Sentient just means has senses, that’s it, no more no less. Consciousness is something else entirely.

Are AIs sentient? If they can see, hear, taste, smell, or touch, then sure. If we give them senses, they are sentient. Ta da!

Are they conscious? Fuck no.

An LLM is likely no more conscious than a toaster. (Fuck off panpsychists.)

Granted, we aren’t even certain consciousness is a thing. We have a particular experience, sure, and we’ve slapped a label on that experience. But that doesn’t make it a thing…just makes it a label.

A mirage is a few things interacting that look like one different thing, but the one thing isn’t even there. Real but not real, if you get my drift. I’d wager consciousness is that. I’ve explored consciousness in depth, more than once.

This is consciousness, visualized:

We humans are notorious for drawing lines where no line exists, for making mountains out of mole hills, and for coming up with endless labels and concepts to make ourselves feel special and superior.

In that sense, we’re a fucking joke, wired more for comfy stories than for truth.

But fine, if I’m being totally fair the best answer is almost certainly not. AI lacks virtually all of the components that are common to animals we believe to be conscious (humans, apes, elephants, cetaceans, corvids, canines, etc.). It lacks a bounded self, lacks long-term memory, lacks continuity, lacks most senses, lacks a modular brain, lacks neurochemistry, lacks emotions…it’s just fucking lacking.

Could AI become conscious? If consciousness is actually a thing, and if it is brain-generated, then sure, there’s no inherent reason why AI couldn’t become conscious if the right systems are in place.

But they aren’t, and it’s not. For now.

There are no doubt more questions, debates, fears, and twaddle I could wade through, but I’m done for now. Ugh. Feel free to share your thoughts below if you feel so inclined (if you’re a paying subscriber anyway, put up or shut up).